The Hidden Cost of Data Quality in AI: From Traditional ML to Autonomous Agents

Introduction

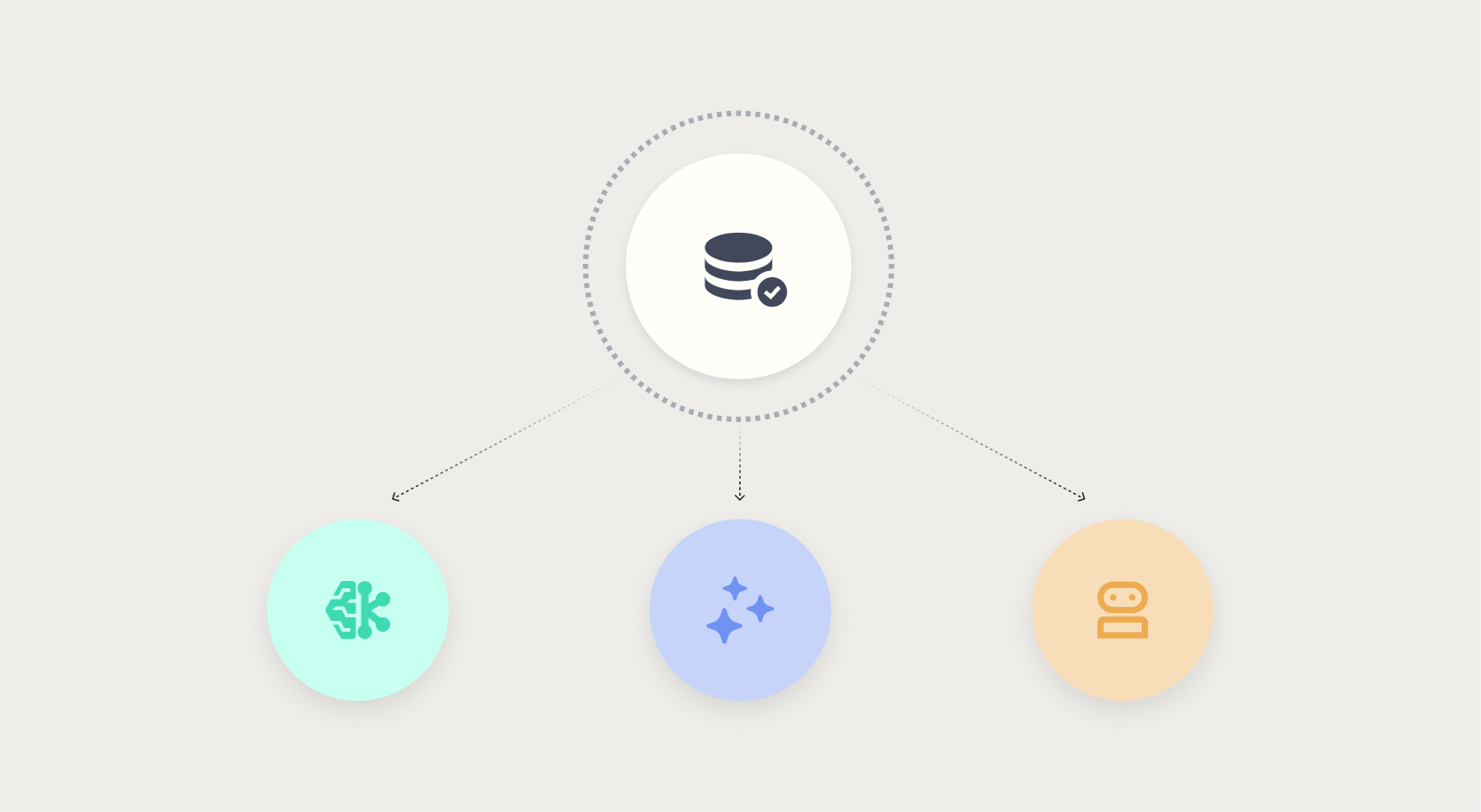

Data quality is the silent foundation of any successful artificial intelligence project. Yet time and again, organizations invest heavily in algorithms and infrastructure while neglecting the integrity of the data feeding those systems. The resulting failures are rarely malicious, but they are costly: pricing models that drift by millions, chatbots that confidently mislead customers, and autonomous agents that commit resources based on incomplete facts. Understanding how data quality failures manifest across three AI paradigms (traditional machine learning, generative AI, and agentic AI) is essential for building trustworthy systems.

The Role of Data Quality in Traditional Machine Learning

In traditional machine learning, data quality issues are often visible and containable. A regression model may output an incorrect prediction, or a classifier may wrongly flag a legitimate transaction as fraudulent, but these errors typically surface on a dashboard or in a report. A human analyst spots the anomaly, investigates the underlying data, and triggers a retraining cycle. The damage is usually limited to the specific prediction batch and can be corrected before it propagates.

For example, consider a retail pricing model that uses historical sales data. If a data entry error skews the model's prices and produces a $2.3 million margin shortfall, the discrepancy is likely to be caught during a monthly review. The model is retrained on corrected data, and further losses are stopped. The key characteristic here is visibility: the failure surfaces in metrics that humans already monitor, and the feedback loop allows for remediation.

However, even in traditional ML, undetected data quality issues lead to model drift over time. Stale features, missing values, or inconsistent units degrade performance gradually. Organizations that lack robust data monitoring often discover these issues only after significant business impact. The familiar relationship between machine learning and data quality is one of periodic checks and corrections, but this approach becomes insufficient as AI systems gain autonomy.

Why Generative AI Demands Better Data

Generative AI breaks the containment of traditional ML. Large language models (LLMs) and image generators are trained on vast corpora, but when they answer from retrieved material, their outputs are only as reliable as the retrieval context they are given. When a chatbot pulls from a stale knowledge base, it delivers a confident, polished answer that may be factually incorrect. Unlike a dashboard number that screams “error,” a wrong answer from a generative AI model can appear plausible and authoritative.

Consider a customer support chatbot that relies on product documentation updated last year. A user asks about current warranty terms, and the bot—operating exactly as designed—returns the old terms. There is no red flag, no anomalous metric. The customer proceeds based on incorrect information, leading to dissatisfaction and potential legal issues. The flaw lies not in the model but in the data it was allowed to use.

Data quality for generative AI extends beyond accuracy to include recency, relevance, and bias. A model trained on biased text will reproduce those biases in its outputs. Stale data produces outdated answers presented as current facts. Unlike traditional ML, where a single bad prediction can often be traced back to a specific data point, generative AI errors are frequently distributional: they emerge from the interplay of training data, retrieval context, and prompt engineering. Detecting these failures requires new approaches, such as ground-truth verification and retrieval-augmented generation (RAG) with quality gates.

Agentic AI: When Data Failures Become Autonomous

The most alarming frontier is agentic AI—systems that take actions on behalf of users or organizations without direct human oversight. An autonomous procurement agent, for instance, may negotiate contracts, place orders, and allocate budgets based on supplier data. If that data contains incomplete vendor profiles or outdated pricing, the agent commits resources under false premises. By the time a human reviews the transactions, the budget is already spent.

What differentiates agentic AI from generative AI is action. A chatbot that gives a wrong answer can be corrected after the fact; an agent that executes a bad decision may cause irreversible financial or operational damage. The tolerance for data quality failures shrinks to near zero. Moreover, the failure signals are weaker: the agent may appear to operate perfectly on bad data, with no dashboard alert or outlier detection. The system’s own “confidence” metrics can be misleading because the model has no awareness of data provenance.

For example, an agentic AI in supply chain management might prioritize a supplier with outdated lead times, causing production delays. The agent followed its optimization logic correctly; the data was simply wrong. The cost of such failures scales with the autonomy and reach of the agent. As organizations deploy agents for tasks ranging from customer acquisition to financial trading, the need for meticulous data quality assurance becomes paramount.

Strategies for Ensuring Data Quality Across AI Paradigms

Continuous Monitoring and Validation

Traditional batch checks are no longer sufficient. Every AI system must have real-time data quality dashboards that track freshness, completeness, consistency, and accuracy. For generative AI, this includes monitoring retrieval sources for staleness. For agentic AI, it means logging every data point used in decision-making and flagging deviations from expected ranges.
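The dimensions named above can be computed with very little machinery. The following is a minimal sketch, assuming a hypothetical `Record` type for supplier pricing data; the field names, the 30-day freshness window, and the expected price range are illustrative assumptions, not values from any particular system.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional, Tuple


@dataclass
class Record:
    # Hypothetical supplier pricing record; field names are illustrative.
    supplier_id: str
    unit_price: Optional[float]
    updated_at: datetime


def quality_report(
    records: List[Record],
    now: datetime,
    max_age: timedelta,
    price_range: Tuple[float, float],
) -> Dict[str, float]:
    """Return the fraction of records that are fresh, complete, and in range."""
    total = len(records)
    if total == 0:
        return {"freshness": 0.0, "completeness": 0.0, "in_range": 0.0}
    low, high = price_range
    # Freshness: the record was updated within the allowed window.
    fresh = sum(1 for r in records if now - r.updated_at <= max_age)
    # Completeness: the price field is populated.
    complete = sum(1 for r in records if r.unit_price is not None)
    # Range check: the price is present and inside the expected bounds.
    in_range = sum(
        1 for r in records
        if r.unit_price is not None and low <= r.unit_price <= high
    )
    return {
        "freshness": fresh / total,
        "completeness": complete / total,
        "in_range": in_range / total,
    }


# Made-up sample data for illustration only.
now = datetime(2024, 6, 1, tzinfo=timezone.utc)
records = [
    Record("s1", 10.0, now - timedelta(days=2)),
    Record("s2", None, now - timedelta(days=40)),
    Record("s3", 950.0, now - timedelta(days=5)),
    Record("s4", 12.5, now - timedelta(days=1)),
]
report = quality_report(records, now, timedelta(days=30), (1.0, 100.0))
print(report)  # {'freshness': 0.75, 'completeness': 0.75, 'in_range': 0.5}
```

In practice these ratios would be emitted on a schedule and compared against alert thresholds; the thresholds themselves depend on the use case.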

Ground-Truth Layers for LLMs

Implement a retrieval-augmented generation (RAG) architecture with a curated knowledge base that is versioned and reviewed. Use a separate validation model to check outputs against known facts before they reach the user. This creates a data quality gate that prevents erroneous information from being delivered.
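One way to picture the retrieval side of such a gate is a filter that sits between the retriever and the model. The sketch below is an assumption-laden illustration: the `Doc` type, the `reviewed` flag, the 180-day review window, and the sample warranty texts are all hypothetical, and no real retrieval library is involved. It covers only the input gate, not the separate output-validation model.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class Doc:
    # Hypothetical knowledge-base entry; all fields are illustrative.
    doc_id: str
    text: str
    version: int
    reviewed: bool
    last_reviewed: date


def gate_context(candidates: List[Doc], today: date, max_age_days: int = 180) -> List[Doc]:
    """Keep only documents that were reviewed within the allowed window."""
    cutoff = today - timedelta(days=max_age_days)
    passed = [d for d in candidates if d.reviewed and d.last_reviewed >= cutoff]
    # Most recently reviewed sources first.
    return sorted(passed, key=lambda d: d.last_reviewed, reverse=True)


def build_prompt(question: str, candidates: List[Doc], today: date) -> Optional[str]:
    """Build a grounded prompt, or return None when no source passes the gate."""
    context = gate_context(candidates, today)
    if not context:
        return None  # the caller should decline or escalate to a human
    sources = "\n".join(f"[{d.doc_id} v{d.version}] {d.text}" for d in context)
    return f"Answer using only these sources:\n{sources}\n\nQuestion: {question}"


# Made-up documents for illustration only.
today = date(2024, 6, 1)
docs = [
    Doc("warranty-2023", "Warranty lasts 12 months.", 1, True, date(2023, 1, 10)),
    Doc("warranty-2024", "Warranty lasts 24 months.", 2, True, date(2024, 5, 1)),
    Doc("warranty-draft", "Warranty lasts 36 months.", 3, False, date(2024, 5, 20)),
]
prompt = build_prompt("What are the current warranty terms?", docs, today)
print(prompt)
```

Returning `None` when nothing passes is a deliberate choice in this sketch: declining to answer is treated as safer than answering from stale or unreviewed material.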

Deterministic Guardrails for Agents

For agentic AI, introduce hard constraints on actions based on data confidence. For example, if supplier lead time data is older than 30 days, require human approval before any purchase commitment. These guardrails act as a safety net when data quality cannot be guaranteed in real time.
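The 30-day rule can be expressed as a small deterministic check that runs before the agent is allowed to commit funds. This is a minimal sketch; the `Decision` enum and the `review_purchase` function are hypothetical names, and treating a missing timestamp as a reason for human review is an added assumption.

```python
from datetime import datetime, timedelta, timezone
from enum import Enum
from typing import Optional


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"
    NEEDS_HUMAN = "needs_human"


# Threshold taken from the example in the text: data older than 30 days.
MAX_LEAD_TIME_AGE = timedelta(days=30)


def review_purchase(lead_time_updated_at: Optional[datetime], now: datetime) -> Decision:
    """Decide whether the agent may commit to a purchase on its own."""
    # Assumption: unknown data age is treated as untrusted.
    if lead_time_updated_at is None:
        return Decision.NEEDS_HUMAN
    if now - lead_time_updated_at > MAX_LEAD_TIME_AGE:
        return Decision.NEEDS_HUMAN
    return Decision.AUTO_APPROVE


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
print(review_purchase(now - timedelta(days=5), now))   # Decision.AUTO_APPROVE
print(review_purchase(now - timedelta(days=31), now))  # Decision.NEEDS_HUMAN
print(review_purchase(None, now))                      # Decision.NEEDS_HUMAN
```

Because the check involves no model inference, its behavior is the same on every run and can be audited independently of the agent.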

Data Provenance and Lineage

Track the origin, transformation, and usage of every data element that influences AI decisions. This is especially critical for agentic AI, where the chain of reasoning may involve multiple data sources. Tools like data catalogs and lineage graphs help pinpoint the root cause when failures occur.
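A lineage graph can be as simple as a mapping from each dataset to the datasets it is derived from. The sketch below walks such a graph to list everything an agent's decision depends on; the dataset names and the dictionary representation are hypothetical, and dedicated catalog tools store considerably more metadata than this.

```python
from typing import Dict, List, Set


def upstream_sources(lineage: Dict[str, List[str]], node: str) -> Set[str]:
    """Return every ancestor of `node` in the lineage graph."""
    seen: Set[str] = set()
    stack = [node]
    while stack:
        current = stack.pop()
        for parent in lineage.get(current, []):
            if parent not in seen:  # also guards against cycles
                seen.add(parent)
                stack.append(parent)
    return seen


# Hypothetical lineage: each key is derived from the datasets in its list.
lineage = {
    "purchase_decision": ["supplier_scores", "budget_table"],
    "supplier_scores": ["vendor_profiles", "lead_times"],
    "lead_times": ["erp_export"],
}

ancestors = upstream_sources(lineage, "purchase_decision")
# Root sources are ancestors that have no recorded upstream of their own.
roots = sorted(name for name in ancestors if name not in lineage)

print(sorted(ancestors))
# ['budget_table', 'erp_export', 'lead_times', 'supplier_scores', 'vendor_profiles']
print(roots)
# ['budget_table', 'erp_export', 'vendor_profiles']
```

When a decision turns out to be wrong, the root list gives the short set of raw sources to inspect first.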

Cross-Functional Data Governance

Data quality is not solely an IT responsibility. It requires collaboration between domain experts, data engineers, AI modelers, and business stakeholders. Establish clear ownership for each data domain and conduct regular audits of data fitness for AI use cases. As AI moves from prediction to action, the business impact of poor data increases, making governance a strategic priority.

Conclusion

Nobody sets out to build a bad AI model. But good intentions do not compensate for data that looks clean until it fails. The evolution from traditional machine learning to generative AI and agentic AI demands a parallel evolution in data quality practices. In traditional ML, failures are often visible and containable. In generative AI, they hide behind confident-sounding but wrong outputs. In agentic AI, they lead to autonomous actions with no safety net. By understanding these different risk profiles and implementing proactive data quality strategies—continuous monitoring, ground-truth layers, deterministic guardrails, and robust governance—organizations can build AI systems that are not only powerful but trustworthy.